Research line

Mobile Robotics and Intelligent Systems

The research activities of the Mobile Robotics line aim to endow mobile robots and ubiquitous computing devices with the skills needed to aid humans in everyday activities. These skills range from purely perceptual ones, such as tracking, recognition or situation awareness, to motion skills, such as localization, mapping, autonomous navigation, path planning or exploration.

Head of line: Alberto Sanfeliu Cortés

Research areas

>> Urban service robotics

>> Social robotics

>> Robot localization and robot navigation

>> SLAM and robot exploration

>> Tracking in computer vision

>> Object recognition

Tech. transfer

Our activity finds applications in several fields through collaboration with our technology partners.

Research projects

We carry out projects from national and international research programmes.

Urban service robotics

The group focuses on the design and development of mobile service robots for human assistance and human-robot interaction. This includes research on novel hardware and software solutions for urban robotic services such as surveillance, exploration, cleaning, transportation, human tracking, human assistance and human guiding.

Social robotics

The group's work on social robotics places an emphasis on human-robot interaction and collaboration. It develops new techniques to predict and learn human behaviors, to support human-robot task collaboration, and to generate empathic robot behaviors, using all types of sensors, computer vision techniques and cognitive systems technologies.

Robot localization and robot navigation

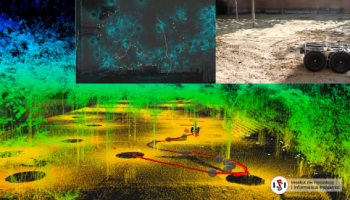

This research area tackles the creation of robust localization solutions for single robots and cooperating robot teams, both indoors and outdoors, using multiple sensor modalities such as GPS, computer vision, laser range finding, inertial navigation system (INS) sensors and raw odometry. The area also seeks methods and algorithms for autonomous robot navigation and robot formation, and applies them on a variety of indoor and outdoor mobile robot platforms.

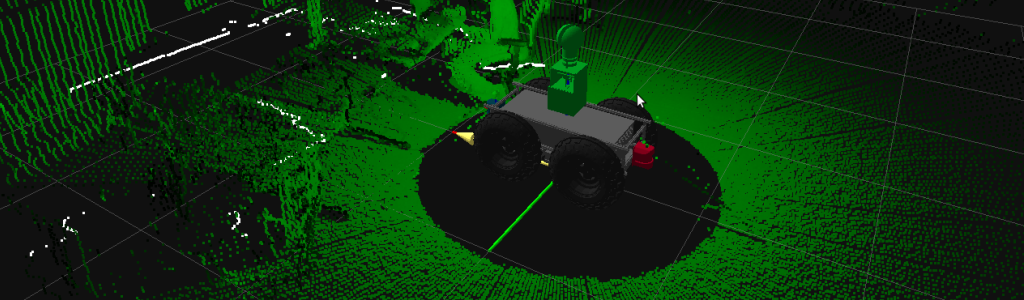

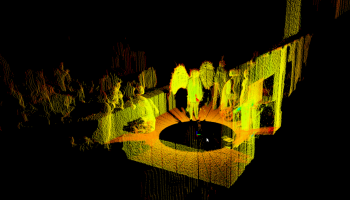

SLAM and robot exploration

We develop solutions for indoor and outdoor simultaneous localization and mapping (SLAM) from computer vision and three-dimensional range data, based on Bayesian estimation. The research includes the development of new filtering and smoothing algorithms that use information-theoretic measures to limit the load of maps, as well as the design and construction of novel sensors for outdoor mapping. This research area also studies methods for autonomous robotic exploration.

Tracking in computer vision

We develop robust algorithms for the detection and tracking of human activities in indoor and outdoor areas, with applications to service robotics, surveillance, and human-robot interaction. This includes tracking algorithms for a single fixed or moving camera, as well as detection and tracking methods over large camera sensor networks.

Object recognition

The group also performs research on object detection and object recognition in computer vision. Current research is heavily based on boosting and other machine learning methodologies that make extensive use of multiple view geometry. We also study the development of unique feature and scene descriptors, invariant to changes in illumination, cast shadows, or deformations.

These are the latest research projects of the Mobile Robotics and Intelligent Systems research line:

-

TRIFFID: auTonomous Robotic aId For increasing FIrst responDers efficiency

European Project

Start Date: 01/10/2024

-

CANOPIES: A Collaborative Paradigm for Human Workers and Multi-Robot Teams in Precision Agriculture Systems

European Project

Start Date: 01/01/2021

-

LOGISMILE 2023: Last mile logistics for autonomous goods delivery

European Project

Start Date: 01/05/2023

-

LOGISMILE: Last mile logistics for autonomous goods delivery

European Project

Start Date: 01/01/2022

-

TERRINET: The European robotics research infrastructure network

European Project

Start Date: 01/12/2017

-

SciRoc: European robotics league plus smart cities robot competitions

European Project

Start Date: 01/02/2018

-

GAUSS: Galileo-EGNOS as an asset for UTM safety and security

European Project

Start Date: 01/03/2018

-

ROBOCOM++: Rethinking robotics for the robot companion of the future

European Project

Start Date: 01/03/2017

-

AEROARMS: AErial RObotics System integrating multiple ARMS and advanced manipulation capabilities for inspection and maintenance

European Project

Start Date: 01/06/2015

-

LOGIMATIC: Tight integration of EGNSS and on-board sensors for port vehicle automation

European Project

Start Date: 01/03/2016

-

ECHORD++: European Clearing House for Open Robotics Development Plus Plus

European Project

Start Date: 01/10/2013

-

Cargo-ANTS: Cargo handling by Automated Next generation Transportation Systems for ports and terminals

European Project

Start Date: 01/09/2013

-

LENA: Lifelong navigation learning using human-robot interaction

National Project

Start Date: 01/09/2023

-

RAADICAL: Robot-assisted activities in daily care and life

National Project

Start Date: 01/09/2021

-

ROCOTRANSP: Robot-human collaboration for goods transportation and delivery

National Project

Start Date: 01/06/2020

-

ColRobTransp: Robot-human collaboration for goods transportation in urban areas

National Project

Start Date: 30/12/2016

-

Robot-Int-Coop: Robot-Human Interaction, Cooperation and Learning in Urban Areas

National Project

Start Date: 01/01/2014

-

PAU+: Perception and Action in Robotics Problems with Large State Spaces

National Project

Start Date: 01/01/2012

-

MIPRCV: CONSOLIDER-INGENIO 2010 Multimodal interaction in pattern recognition and computer vision

National Project

Start Date: 01/10/2007

-

C-URUS: Complementary grant for the European project IST-STREP URUS

National Project

Start Date: 01/01/2008

-

NAVROB: Integration of robust perception, learning, and navigation systems in mobile robotics

National Project

Start Date: 13/12/2004

-

ANNA: Supervised learning of industrial scenes by means of an active vision equipped mobile robot.

National Project

Start Date: 28/12/2001

-

MARCO: Active vision systems based in automatic learning for industrial applications

National Project

Start Date: 01/12/1998

-

ALLAUS: System and device for the remote localization of avalanche victims

CSIC Project

Start Date: 01/03/2025

-

DIMECO: Design, machining and construction of robotic parts and mechanisms

CSIC Project

Start Date: 01/01/2018

-

MELPC: Improvement of the workstations of the Fuel Cell Laboratory

CSIC Project

Start Date: 01/10/2018

-

OTDIRI: Support for the activities of the IRI transfer and outreach office

CSIC Project

Start Date: 01/05/2018

-

COLAROB: Human-robot collaboration in manipulation, navigation and exploration tasks

CSIC Project

Start Date: 01/04/2013

-

HandIA: New AI-based rehabilitation tool for estimating the position of the hand while interacting with objects

Regional Project

Start Date: 01/03/2025

-

BotNet: New parcel delivery model in urban superblocks using a network of autonomous electric vehicles

Regional Project

Start Date: 10/11/2023

-

SGR VIS: Consolidated group on Artificial Vision and Intelligent Systems

Regional Project

Start Date: 01/01/2017

-

STAFF: Development of an automatic and flexible transport system between workstations of the UPC flexible manufacturing center

Regional Project

Start Date: 01/01/1996

-

SOCIAL PIA: Cooperative Social PIA model for Cybernetics Avatars (Moonshot Research and Development Program)

UPC Project

Start Date: 01/04/2023

-

FENILO: Framework for 2D Estimation and Navigation using IMU, Lidar and Odometry

UPC Project

Start Date: 01/07/2020

-

CARNET 2023-3: WEB application for short range ONA2 motions

Technology Transfer Contract

Start Date: 11/07/2023

-

GEPEVE: Gestión predictiva de energía para eficiencia energética en vehículos eléctricos con control de crucero adaptativo

Technology Transfer Contract

Start Date: 19/07/2021

-

CARNET 2023-1: Demonstration and support at the FMDs on site in Ehra/Wolfsburg

Technology Transfer Contract

Start Date: 01/07/2022

-

CARNET 2022-1: Autonomous Delivery Drive. Advanced navigation in urban areas

Technology Transfer Contract

Start Date: 22/12/2021

-

DOGS2: Development of an IMU aided AGV Guiding System

Technology Transfer Contract

Start Date: 07/07/2021

-

CARNET 2021-2: Autonomous Driving Challenge 2021 (Phase 2)

Technology Transfer Contract

Start Date: 01/06/2021

-

MODPOW: Development of new robot exploration techniques for open and closed environments

Technology Transfer Contract

Start Date: 09/03/2021

-

CARNET 2021-1: Autonomous Driving Challenge 2021 (Phase 1)

Technology Transfer Contract

Start Date: 03/03/2021

-

CARNET 2020: Sensorization and navigation for a robotic platform (Phase 2)

Technology Transfer Contract

Start Date: 11/06/2020

-

TRREX-PROMAUT: Enabling technologies of the extended-range robot for the highly flexible factory

Technology Transfer Contract

Start Date: 01/01/2019

-

TRREX-DTA: Enabling technologies of the extended-range robot for the highly flexible factory

Technology Transfer Contract

Start Date: 01/03/2019

-

CARNET 2019: Sensorization and navigation for a robotic platform (Phase 1)

Technology Transfer Contract

Start Date: 01/10/2019

-

SMART FACTORY: Research on enabling technologies of intelligent systems for the factories of the future

Technology Transfer Contract

Start Date: 01/10/2015

-

DITCAV: Advisory services for the development and integration of specific techniques in the area of autonomous vehicle driving

Technology Transfer Contract

Start Date: 20/07/2018

-

ICCS: Concept for intrinsic camera calibration station

Technology Transfer Contract

Start Date: 01/01/2017

-

#RobòticaiÈtica: Organization and management of the series "Dialogues on robotics and ethics"

Technology Transfer Contract

Start Date: 14/09/2018

-

LCAS: Object localization with LIDAR for the validation of a CMS system

Technology Transfer Contract

Start Date: 11/01/2016

-

ADC: Technical coordination and support for the SEAT Autonomous Driving Cup competition

Technology Transfer Contract

Start Date: 01/07/2017

-

VW_Vehicle-Robot: Development and innovation on the "vehicle-robot"

Technology Transfer Contract

Start Date: 01/09/2016

-

AIN-IRI: Obstacle detection for aerial robots by means of optical techniques

Technology Transfer Contract

Start Date: 01/02/2010

-

ICARUS: Consultancy work on technological research topics related to the development of a navigation support system for a mobile robot

Technology Transfer Contract

Start Date: 16/10/2006

-

UTILAR II: Technical consultancy for a research and development project on new electrical connectors for automotive heated rear windows

Technology Transfer Contract

Start Date: 09/06/2006

-

UTILAR: Design of an automatic system for pre-soldering terminals of heated rear windows

Technology Transfer Contract

Start Date: 10/11/2005

These are the most recent publications (2025 - 2024) of the Mobile Robotics and Intelligent Systems research line:

-

A.M. Puig-Pey, B. Amante, Y. Bolea and A. Sanfeliu. A methodological proposal for the integration of robotic services in the urban public space of cities. ACE | Architecture, City and Environment, 20(58): 12591, 2025.

-

A. Santamaria-Navarro, S. Hernández, F. Herrero, A. López, I. del Pino, N.A. Rodríguez, C. Fernandez, A. Baldó, C. Lemardelé, A. Garrell Zulueta, J. Vallvé, H. Taher, A.M. Puig-Pey, L. Pagès and A. Sanfeliu. Towards the deployment of an autonomous last-mile delivery robot in urban areas. IEEE Robotics and Automation Magazine, 2025.

-

P. Vial, J. Solà, N. Palomeras and M. Carreras. On Lie group IMU and linear velocity preintegration for autonomous navigation considering the Earth rotation compensation. IEEE Transactions on Robotics, 41: 1346-1364, 2025.

-

F. Gebelli, L.B. Hriscu, R. Ros, S. Lemaignan, A. Sanfeliu and A. Garrell Zulueta. Personalised explainable robots using LLMs, 2025 ACM/IEEE International Conference on Human-Robot Interaction, 2025, Melbourne, Australia, pp. 1304-1308.

-

F. Gebelli, A. Garrell Zulueta, S. Lemaignan and R. Ros. Dynamics of mental models: Objective vs. subjective user understanding of a robot in the wild. IEEE Robotics and Automation Letters, 10(8): 7755-7762, 2025.

-

A. Garrell Zulueta, I. del Pino, A. Santamaria-Navarro and A. Sanfeliu. Collaborative Transport Robots (CTRs) applicable in the proximal urban environment: A review. ACE | Architecture, City and Environment, 20(58): 12627, 2025.

-

J. Cani, K. Panagiotis, K. Foteinos, I. Kefaloukos, L. Argyriou, M. Falelakis, I. del Pino, A. Santamaria-Navarro, M. Čech, O. Severa, A. Umbrico, F. Fracasso, A. Orlandini, D. Drakoulis, E. Markakis, I. Varlamis and G. Th. Papadopoulos. TRIFFID: Autonomous robotic aid for increasing first responders efficiency, 6th International Conference in Electronic Engineering and Information Technology, 2025, Chania, to appear.

-

P.T. Singamaneni, P. Bachiller, L.J. Manso, A. Garrell Zulueta, A. Sanfeliu, A. Spalanzani and R. Alami. A survey on socially aware robot navigation: taxonomy and future challenges. The International Journal of Robotics Research, 43(10): 1533-1572, 2024.

-

O. Gil. Robot navigation issues and human-robot collaborative search using deep learning methods, 2024 IRI Doctoral Day, 2024, Barcelona, pp. 8.

-

J.E. Domínguez and A. Sanfeliu. Voice Command Recognition for Explicit Intent Elicitation in Collaborative Object Transportation Tasks: a ROS-based Implementation, 2024 ACM/IEEE International Conference on Human-Robot Interaction, 2024, Boulder, CO, USA, pp. 412–416.

-

H. Taher. Deep learning-based scene understanding for urban autonomous navigation, 2024 IRI Doctoral Day, 2024, Barcelona, pp. 17.

-

J.E. Domínguez, N.A. Rodríguez and A. Sanfeliu. Perception–intention–action cycle in human–robot collaborative tasks: The collaborative lightweight object transportation use-case. International Journal of Social Robotics, 2024, to appear.

-

Y. Tian and J. Andrade-Cetto. SDformerFlow: Spiking neural network transformer for event-based optical flow, 27th International Conference on Pattern Recognition, 2024, Kolkata, in Pattern Recognition, Vol 15315 of Lecture Notes in Computer Science, pp. 475-491, 2024, Cham.

-

M. Dalmasso, V. Sanchez-Anguix, A. Garrell Zulueta, P. Jiménez and A. Sanfeliu. Exploring Preferences in Human-Robot Navigation Plan Proposal Representation, 2024 ACM/IEEE International Conference on Human-Robot Interaction, 2024, Boulder, CO, USA, pp. 369-373.

-

M. Dalmasso, F. Garcia-Ruiz, T. Ciarfuglia, L. Saraceni, I. M. Motoi, E. Gil and A. Sanfeliu. Human-robot harvesting plan negotiation: Perception, grape mapping and shared planning, 7th Iberian Robotics Conference, 2024, Madrid, Spain, pp. 1-8.

-

J.A. Aguilar, D. Chanal, D. Chamagne, N. Yousfi, M. Péra, A.P. Husar and J. Andrade-Cetto. A hybrid control-oriented PEMFC model based on echo state networks and Gaussian radial basis functions. Energies, 17(2): 508, 2024.

-

D. Natera-de Benito, A. Favata, J. Exposito, R. Gallart, O. Moya, S. Roca, A. Marzabal, L. van Noort, C. Torras, A. Nascimento, J. Medina-Cantillo, R. Pàmies-Vilà and J.M. Font. Advancing upper limb motor function evaluation in DMD and SMA via kinematic parameterization with the wearable device, 2024 Annual Congress of the World Muscle Society, 2024, Prague, pp. 14.

-

F. Gebelli, R. Ros and A. Garrell Zulueta. Participatory design for explainable robots, 2024 Workshop on Explainability for Human-Robot Collaboration at the 2024 ACM/IEEE International Conference on Human-Robot Interaction, 2024, USA.

-

B. Amante, A.M. Puig-Pey, J.L. Zamora, J. Moreno and A. Sanfeliu. Robots in waste management. Detritus. Multidisciplinary Journal for Circular Economy and Sustainable Management of Residues, 29: 179-190, 2024.

-

F. Gebelli, R. Ros, S. Lemaignan and A. Garrell Zulueta. Co-designing explainable robots: a participatory design approach for HRI, 33rd IEEE International Symposium on Robot and Human Interactive Communication, 2024, Pasadena, California, USA, pp. 1564-1570, IEEE.

-

J. Laplaza, F. Moreno-Noguer and A. Sanfeliu. Enhancing robotic collaborative tasks through contextual human motion prediction and intention inference. International Journal of Social Robotics: 1-20, 2024, to appear.

-

B. Morrell, K. Otsu, A. Agha, D. Fan, S. Kim, M.F. Ginting, X. Lei, J. Edlund, S. Fakoorian, A. Bouman, F. Chavez, T. Kim, G.J. Correa, M. Saboia Da Silva, A. Santamaria-Navarro et al. An Addendum to NeBula: Towards extending TEAM CoSTAR’s solution to larger scale environments. IEEE Transactions on Field Robotics, 1: 476-526, 2024.

-

S.H. Seo, D.J. Rea, K. Kochigami, T. Kanda, J.E. Young, Y. Nakano, A. Sanfeliu and H. Ishiguro. Symbiotic society with avatars (SSA): Toward empowering social interactions beyond space and time, 2024 ACM/IEEE International Conference on Human-Robot Interaction, 2024, Boulder, CO, USA, pp. 1352-1354.

-

N. Ugrinovic, A. Ruiz, A. Agudo, A. Sanfeliu and F. Moreno-Noguer. PIRO: Permutation-invariant relational network for multi-person 3D pose estimation, 19th International Conference on Computer Vision Theory and Applications, 2024, Rome (Italy), pp. 295-305.

-

J. Palmieri, P. Di Lillo, A. Sanfeliu and A. Marino. Perception-driven shared control architecture for agricultural robots performing harvesting tasks, 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2024, Abu Dhabi, UAE, pp. 9328-9334.

-

J.E. Domínguez and A. Sanfeliu. Exploring transformers and visual transformers for force prediction in human-robot collaborative transportation tasks, 2024 IEEE International Conference on Robotics and Automation, 2024, Yokohama (Japan), pp. 3191-3197.

-

P. Falqueto, A. Sanfeliu, L. Palopoli and D. Fontanelli. Learning priors of human motion with vision transformers, 48th IEEE International Conference on Computers, Software, and Applications, 2024, Osaka, Japan, pp. 382-389.

-

J.E. Domínguez and A. Sanfeliu. Anticipation and proactivity. Unraveling both concepts in human-robot interaction through a handover example, 33rd IEEE International Symposium on Robot and Human Interactive Communication, 2024, Pasadena, California, USA, pp. 957-962, IEEE.

-

O. Gil and A. Sanfeliu. Human-robot collaborative minimum time search through sub-priors in ant colony optimization. IEEE Robotics and Automation Letters, 9(11): 10216-10223, 2024.

-

E. Repiso, A. Garrell Zulueta and A. Sanfeliu. Adaptive social planner to accompany people in real-life dynamic environments. International Journal of Social Robotics, 16: 1189-1221, 2024.

-

J.E. Domínguez and A. Sanfeliu. Force and velocity prediction in human-robot collaborative transportation tasks through video retentive networks, 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems, 2024, Abu Dhabi, UAE, pp. 9307-9313.

-

I. del Pino, A. Santamaria-Navarro, A. Garrell Zulueta, F. Torres and J. Andrade-Cetto. Probabilistic graph-based real-time ground segmentation for urban robotics. IEEE Transactions on Intelligent Vehicles, 9(5): 4989-5002, 2024.

-

J. Laplaza, J.J. Oliver, A. Sanfeliu and A. Garrell Zulueta. Body gestures recognition for social human-robot interaction, 7th Iberian Robotics Conference, 2024, Madrid, Spain.

-

M. Dalmasso, J.E. Domínguez, I.J. Torres, P. Jiménez, A. Garrell Zulueta and A. Sanfeliu. Shared task representation for human–robot collaborative navigation: The collaborative search case. International Journal of Social Robotics, 16: 145-171, 2024.

-

F. Gebelli, R. Ros, S. Lemaignan and A. Garrell Zulueta. Evaluating the impact of explainability on the users’ mental models of robots over time, 33rd IEEE International Symposium on Robot and Human Interactive Communication, 2024, Pasadena, California, USA, IEEE.

-

P. Vial, N. Palomeras, J. Solà and M. Carreras. Underwater Pose SLAM using GMM scan matching for a mechanical profiling sonar. Journal of Field Robotics, 41: 511-538, 2024.

-

J.E. Domínguez. Perception, prediction and planning techniques in collaboration human-robot tasks, 2024 IRI Doctoral Day, 2024, Barcelona, pp. 6.

Mobile Robotics Laboratory

The Mobile Robotics Laboratory is an experimental area primarily devoted to hands-on research with mobile robots. The lab includes three Pioneer platforms, two Segway-based service robots for urban robotics research, a four-wheel rough-terrain outdoor mobile robot, a six-legged LAURON-III walking robot, and a large number of sensors and cameras.

Barcelona Robot Laboratory

The Barcelona Robot Lab encompasses an outdoor pedestrian area of 10,000 m², equipped with 21 fixed cameras, a set of heterogeneous robots, full coverage of Wi-Fi and Mica devices, and partial GPS coverage. The area has moderate vegetation and intense cast shadows, which makes it a demanding test bed for computer vision algorithms.

Researchers

-

Andrade Cetto, Juan

cetto (at) iri.upc.edu

-

Bo, Valerio

vbo (at) iri.upc.edu

-

del Pino Bastida, Iván

idelpino (at) iri.upc.edu

-

Garrell Zulueta, Anaís

agarrell (at) iri.upc.edu

-

Puig-Pey Claveria, Ana Maria

apuigpey (at) iri.upc.edu

-

Sanfeliu Cortés, Alberto

sanfeliu (at) iri.upc.edu

-

Santamaria Navarro, Angel

asantamaria (at) iri.upc.edu

-

Solà Ortega, Joan

jsola (at) iri.upc.edu

-

Vallvé Navarro, Joan

jvallve (at) iri.upc.edu

PhD Students

-

Albardaner Torras, Jaume

jalbardaner (at) iri.upc.edu

-

Bejarano Sepulveda, Edison Jair

ebejarano (at) iri.upc.edu

-

Costa Santamaria, Adrià

acostas (at) iri.upc.edu

-

Dalmasso Blanch, Marc

mdalmasso (at) iri.upc.edu

-

De Frutos Arranz, Raquel

rdefrutos (at) iri.upc.edu

-

Gebelli Guinjoan, Ferran

fgebelli (at) iri.upc.edu

-

Hriscu Acsinte-Staut, Lavinia Beatrice

lhriscu (at) iri.upc.edu

-

Sanches Moura, Mateus

msanches (at) iri.upc.edu

-

Taher, Hafsa

htaher (at) iri.upc.edu

-

Wang, Zhixiong

zhwang (at) iri.upc.edu

-

Zhang, Na

nzhang (at) iri.upc.edu

Master Students

-

Carles Pamias, Sara

scarles (at) iri.upc.edu

-

Li, Yunju

yli (at) iri.upc.edu

-

Tur Ruiz, Joan

jtur (at) iri.upc.edu

Bachelor's Thesis (TFG) Students

-

Arcos Conchillo, Diego

darcos (at) iri.upc.edu

-

Michalkova, Soña

smichalkova (at) iri.upc.edu

-

Minguella Torra, Maria

mminguella (at) iri.upc.edu

-

Ni, Huilin

hni (at) iri.upc.edu

-

Park Gonzalez, Nicolas Gil

npark (at) iri.upc.edu

-

Sánchez Gala, Iván

isanchez (at) iri.upc.edu

-

Zakreva Prykolota, Anna Maria

azakreva (at) iri.upc.edu

Support Staff

-

Herrero Cotarelo, Fernando

fherrero (at) iri.upc.edu

-

Martínez Fité, Oriol

omartinez (at) iri.upc.edu

-

Moreno Borràs, Víctor

vmoreno (at) iri.upc.edu

-

Perez Ruiz, Carlos

cperez (at) iri.upc.edu
